MATLAB人工神经网络.docx

- Document ID: 11568856

- Uploaded: 2023-03-19

- Format: DOCX

- Pages: 12

- Size: 498.16 KB


MATLAB Artificial Neural Networks

Artificial Neural Networks

Abstract:

An Artificial Neural Network (ANN) is a functional imitation of a simplified model of biological neurons. Its goal is to construct useful 'computers' for real-world problems and to reproduce intelligent data evaluation techniques such as pattern recognition, classification and generalization by using simple, distributed and robust processing units called artificial neurons.

This paper presents a simple application of the artificial neural network: its working process, design and performance analysis.

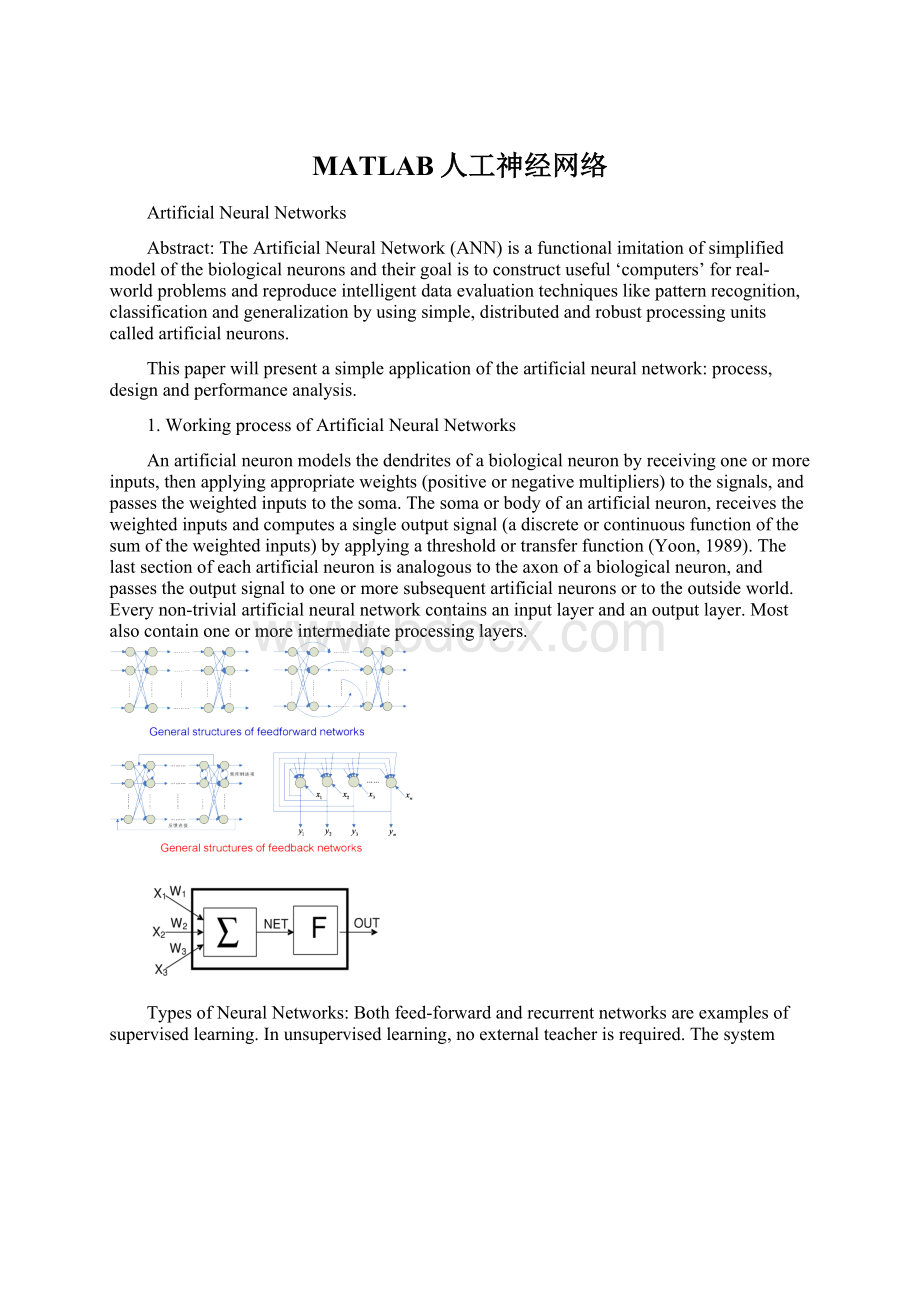

1. Working process of Artificial Neural Networks

An artificial neuron models the dendrites of a biological neuron by receiving one or more inputs, applying appropriate weights (positive or negative multipliers) to the signals, and passing the weighted inputs to the soma. The soma, or body, of an artificial neuron receives the weighted inputs and computes a single output signal (a discrete or continuous function of the sum of the weighted inputs) by applying a threshold or transfer function (Yoon, 1989). The last section of each artificial neuron is analogous to the axon of a biological neuron, and passes the output signal to one or more subsequent artificial neurons or to the outside world. Every non-trivial artificial neural network contains an input layer and an output layer. Most also contain one or more intermediate processing layers.
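The neuron described above can be sketched in a few lines of MATLAB. This is only an illustration: the input values, weights and threshold are assumed numbers, not taken from the paper, and both a discrete (step) and a continuous (log-sigmoid) transfer function are shown.

```matlab
% Minimal sketch of one artificial neuron (all numeric values are assumptions).
inputs  = [0.5, -1.0, 2.0];   % signals arriving at the "dendrites"
weights = [0.8,  0.2, -0.5];  % positive or negative multipliers
theta   = 0.1;                % threshold (assumed value)

net = sum(inputs .* weights) - theta;   % "soma": weighted sum minus threshold
out_step    = double(net >= 0);         % discrete output: hard threshold
out_sigmoid = 1 / (1 + exp(-net));      % continuous output: log-sigmoid transfer function

fprintf('net = %.2f, step = %d, sigmoid = %.4f\n', net, out_step, out_sigmoid);
```

Either output would then be passed on, like the axon signal, to the next layer or to the outside world.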

Types of Neural Networks:

Both feed-forward and recurrent networks are commonly trained by supervised learning, in which an external teacher supplies the desired outputs. In unsupervised learning, no external teacher is required. The system self-organizes the input data, discovering for itself the regularities and collective properties of the data.

Feed-forward networks have the ability to learn. To do so, an artificial neural network must learn to produce a desired output by modifying the weights applied to its inputs. The process by which this is done is simple.

2. Problems

A. 9 training samples, 361 testing samples.

B. 9 training samples, 361 testing samples.

C. 11*11 training samples, 41*41 testing samples.

3. Designing

Weighted sum

Activation function

Error function
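The formulas that belong to these three labels did not survive in this copy. The block below is a reconstruction from the code in Steps 3 and 4, not the author's original notation: the symbols $\theta^{(1)}_h$ and $\theta^{(2)}_o$ are assumed names for the thresholds `base1` and `base2`, and $b$, $c$ stand for the `bin`/`bout` and `cin`/`cout` variables.

```latex
\begin{aligned}
\text{Weighted sum:}\quad
  & b^{\mathrm{in}}_h = \sum_{i} a_i\, w_{ih} - \theta^{(1)}_h,
  \qquad c^{\mathrm{in}}_o = \sum_{h} b^{\mathrm{out}}_h\, v_{ho} - \theta^{(2)}_o \\
\text{Activation function:}\quad
  & f(s) = \frac{1}{1 + e^{-s}},
  \qquad b^{\mathrm{out}}_h = f\bigl(b^{\mathrm{in}}_h\bigr),
  \quad c^{\mathrm{out}}_o = f\bigl(c^{\mathrm{in}}_o\bigr) \\
\text{Error function:}\quad
  & E_j = \sum_{o} \bigl(y_o - c^{\mathrm{out}}_o\bigr)^2,
  \qquad E_{\mathrm{sum}} = \sum_{j} E_j
\end{aligned}
```

Here $E_j$ corresponds to `Testerror(j)` for sample $j$ and $E_{\mathrm{sum}}$ to `error_sum`.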

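Step 1: initialize the weights and other parameters

defaultpoints = 50;  % number of hidden-layer nodes

inputpoints = 2;  % number of input-layer nodes

outputpoints = 2;  % number of output-layer nodes

Testerror = zeros(1, 100);  % error recorded for each test point

a = zeros(1, inputpoints);  % input-layer node values

y = zeros(1, outputpoints);  % target output values of the current sample

w = zeros(inputpoints, defaultpoints);  % input-to-hidden weights

% Weight initialization matters: initializing with rand, for example, gives very unpredictable results, so zeros is preferred here

v = zeros(defaultpoints, outputpoints);  % hidden-to-output weights

bin = rand(1, defaultpoints);  % hidden-layer inputs

bout = rand(1, defaultpoints);  % hidden-layer outputs

base1 = zeros(1, defaultpoints);  % hidden-layer thresholds, initialized to 0

cin = rand(1, outputpoints);  % output-layer inputs

cout = rand(1, outputpoints);  % output-layer outputs

base2 = zeros(1, outputpoints);  % output-layer thresholds, initialized to 0

error = zeros(1, outputpoints);  % fitting error (note: this name shadows MATLAB's built-in error function)

errors = 0; error_sum = 0;  % accumulated error sum

error_rate_cin = rand(defaultpoints, outputpoints);  % derivative of the error with respect to the output-layer weights

error_rate_bin = rand(inputpoints, defaultpoints);  % derivative of the error with respect to the input-layer weights

alfa = 1;  % alfa scales the hidden-to-output weight update; it has a strong influence

belt = 0.5;  % belt scales the input-to-hidden weight update; it has a smaller influence

gama = 3;  % gama is the error amplification factor; it affects tracking speed and fitting accuracy

trainingROUND = 5;  % number of training rounds; a few dozen rounds sometimes work better than hundreds or thousands

sampleNUM = 100;  % number of sample points

x1 = zeros(sampleNUM, inputpoints);  % training input matrix

y1 = zeros(sampleNUM, outputpoints);  % training output matrix

x2 = zeros(sampleNUM, inputpoints);  % testing input matrix

y2 = zeros(sampleNUM, outputpoints);  % testing output matrix

observeOUT = zeros(sampleNUM, outputpoints);  % monitored network outputs, one row per sample

i = 0; j = 0; k = 0;  % j is the sample index within one training round and must not be reused elsewhere

i = 0; h = 0; o = 0;  % input-layer, hidden-layer and output-layer indices

x = 0:0.1:50;  % sampling grid with step size 0.1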

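Step 2: select the sample inputs and outputs

for j = 1:sampleNUM  % assign the sample inputs and outputs here; adapt this to the specific application

  x1(j,1) = x(j);

  x2(j,1) = 0.3245*x(2*j)*x(j);

  temp = rand(1,1);

  x1(j,2) = x(j);

  x2(j,2) = 0.3*x(j);

  y1(j,1) = sin(x1(j,1));

  y1(j,2) = cos(x1(j,2))*cos(x1(j,2));

  y2(j,1) = sin(x2(j,1));

  y2(j,2) = cos(x2(j,2))*cos(x2(j,2));

end

for o = 1:outputpoints

  y1(:,o) = (y1(:,o) - min(y1(:,o))) / (max(y1(:,o)) - min(y1(:,o)));

  % normalize so that the outputs fall in [0,1]; appropriate when the activation function is the log-sigmoid

  y2(:,o) = (y2(:,o) - min(y2(:,o))) / (max(y2(:,o)) - min(y2(:,o)));

end

for i = 1:inputpoints

  x1(:,i) = (x1(:,i) - min(x1(:,i))) / (max(x1(:,i)) - min(x1(:,i)));

  % the inputs must be normalized to the same range as the outputs, [0,1]

  x2(:,i) = (x2(:,i) - min(x2(:,i))) / (max(x2(:,i)) - min(x2(:,i)));

end

% The two loops below are meant to run once per sample index j, inside the training loop (not shown in this excerpt)

for i = 1:inputpoints  % load sample j into the input layer

  a(i) = x1(j,i);

end

for o = 1:outputpoints  % load the target outputs of sample j

  y(o) = y1(j,o);

end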
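The column-by-column min-max normalization above can also be written once and reused. The sketch below is an assumption-laden illustration: `minmax01` is a hypothetical helper name, and the implicit expansion it relies on needs MATLAB R2016b or later (or GNU Octave).

```matlab
% Min-max scaling of every column to [0,1]; 'minmax01' is a hypothetical helper name.
% Relies on implicit expansion (MATLAB R2016b+ or GNU Octave).
minmax01 = @(M) (M - min(M, [], 1)) ./ (max(M, [], 1) - min(M, [], 1));

t  = (0:0.1:9.9)';               % 100 sample points, like x(1:sampleNUM) in Step 1
Y  = [sin(t), cos(t).^2];        % same target functions as y1 in Step 2
Yn = minmax01(Y);                % every column rescaled independently

disp([min(Yn); max(Yn)]);        % first row: column minima, second row: column maxima
```

A column whose values are all equal would give a zero denominator and produce NaN, so constant inputs need to be handled separately.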

Step 3: compute the input and output of the hidden layer

for h = 1:defaultpoints

  bin(h) = 0;

  for i = 1:inputpoints

    bin(h) = bin(h) + a(i)*w(i,h);

  end

  bin(h) = bin(h) - base1(h);

  bout(h) = 1/(1 + exp(-bin(h)));  % the hidden layer uses the log-sigmoid activation function

end
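The double loop of Step 3 is a vector-matrix product followed by an element-wise sigmoid. The following sketch shows the vectorized form on a small example and cross-checks it against the explicit loops; the sizes and numeric values are assumptions chosen for illustration.

```matlab
% Vectorized equivalent of the Step 3 loops, with small illustrative sizes.
inputpoints = 2; defaultpoints = 4;
a     = [0.3, 0.7];                                      % one normalized input sample (assumed)
w     = 0.1 * reshape(1:8, inputpoints, defaultpoints);  % illustrative weights (assumed)
base1 = zeros(1, defaultpoints);                         % hidden-layer thresholds

bin  = a * w - base1;                 % 1-by-defaultpoints weighted sums
bout = 1 ./ (1 + exp(-bin));          % log-sigmoid activation, element-wise

% Cross-check against the explicit double loop from Step 3.
bin_loop = zeros(1, defaultpoints);
for h = 1:defaultpoints
    for i = 1:inputpoints
        bin_loop(h) = bin_loop(h) + a(i) * w(i, h);
    end
    bin_loop(h) = bin_loop(h) - base1(h);
end
disp(max(abs(bin - bin_loop)));       % difference should be at round-off level
```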

Step 4: compute the input and output of the output layer, and the partial derivatives of the error function for each neuron of the output layer.

temp_error = 0;

for o = 1:outputpoints

  cin(o) = 0;

  for h = 1:defaultpoints

    cin(o) = cin(o) + bout(h)*v(h,o);

  end

  cin(o) = cin(o) - base2(o);

  cout(o) = 1/(1 + exp(-cin(o)));  % the output layer uses the log-sigmoid activation function

  observeOUT(j,o) = cout(o);

  error(o) = y(o) - cout(o);

  temp_error = temp_error + error(o)*error(o);  % record the actual error; it must not be multiplied by gama

  error(o) = gama*error(o);

end

Testerror(j) = temp_error;

error_sum = error_sum + Testerror(j);

for o = 1:outputpoints

  error_rate_cin(o) = error(o)*cout(o)*(1 - cout(o));

end
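As a compact illustration of Step 4, the sketch below computes the output layer, the recorded squared error and the output-layer error terms in vectorized form. All numeric values are assumptions, and the variable is named `err` rather than `error` to avoid shadowing MATLAB's built-in function.

```matlab
% Vectorized sketch of Step 4 (all numeric values are assumptions).
bout  = [0.6, 0.4, 0.5];                   % hidden-layer outputs
v     = [0.2 -0.1; 0.4 0.3; -0.5 0.1];     % hidden-to-output weights (3-by-2)
base2 = zeros(1, 2);                       % output-layer thresholds
y     = [1, 0];                            % target outputs in [0,1]
gama  = 3;                                 % error amplification factor

cin  = bout * v - base2;                   % weighted sums of the output layer
cout = 1 ./ (1 + exp(-cin));               % log-sigmoid outputs
err  = y - cout;                           % raw error, before amplification
temp_error = sum(err.^2);                  % recorded error uses the unscaled values
delta_out  = gama * err .* cout .* (1 - cout);   % plays the role of error_rate_cin

fprintf('squared error = %.4f\n', temp_error);
```

The factor `cout .* (1 - cout)` is the derivative of the log-sigmoid, which is why it appears in the error term.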

Step 5: compute the partial derivatives of the error function for each neuron of the hidden layer, using the output-layer errors and weights

for h = 1:defaultpoints

  error_rate_bin(h) = 0;

  for o = 1:outputpoints

    error_rate_bin(h) = error_rate_bin(h) + error_rate_cin(o)*v(h,o);

  end

  error_rate_bin(h) = error_rate_bin(h)*bout(h)*(1 - bout(h));

end
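The hidden-layer expression in Step 5 follows from the chain rule. The derivation below is a sketch under stated assumptions: it uses the conventional factor $\tfrac12$ in the error (the recorded error in the code omits it, which only rescales the learning rate), and the symbols $b$, $c$, $\delta$, $\theta^{(2)}$ are notation introduced here for `bout`, `cout`, the `error_rate_*` variables and `base2`.

```latex
\begin{aligned}
E &= \tfrac12 \sum_{o} \bigl(y_o - c^{\mathrm{out}}_o\bigr)^2,
  \qquad c^{\mathrm{out}}_o = f\bigl(c^{\mathrm{in}}_o\bigr),
  \qquad c^{\mathrm{in}}_o = \sum_{h} b^{\mathrm{out}}_h v_{ho} - \theta^{(2)}_o,
  \qquad f'(s) = f(s)\bigl(1 - f(s)\bigr) \\[4pt]
\delta_o &\equiv -\frac{\partial E}{\partial c^{\mathrm{in}}_o}
  = \bigl(y_o - c^{\mathrm{out}}_o\bigr)\, c^{\mathrm{out}}_o \bigl(1 - c^{\mathrm{out}}_o\bigr) \\[4pt]
\delta_h &\equiv -\frac{\partial E}{\partial b^{\mathrm{in}}_h}
  = -\sum_{o} \frac{\partial E}{\partial c^{\mathrm{in}}_o}\,
     \frac{\partial c^{\mathrm{in}}_o}{\partial b^{\mathrm{out}}_h}\,
     \frac{\partial b^{\mathrm{out}}_h}{\partial b^{\mathrm{in}}_h}
  = \Bigl(\sum_{o} \delta_o\, v_{ho}\Bigr)\, b^{\mathrm{out}}_h \bigl(1 - b^{\mathrm{out}}_h\bigr)
\end{aligned}
```

In the code, `error_rate_cin(o)` is $\delta_o$ multiplied by `gama`, so `error_rate_bin(h)` is $\delta_h$ multiplied by the same factor.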

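Step 6: update the connection weights between the hidden layer and the output layer, and between the input layer and the hidden layer.

for h = 1:defaultpoints

  base1(h) = base1(h) - 5*error_rate_bin(h)*bin(h);  % threshold update as used by the author (fixed factor 5, scaled by bin(h))

  for o = 1:outputpoints

    v(h,o) = v(h,o) + alfa*error_rate_cin(o)*bout(h);  % hidden-to-output weight update

    %base1(i)=base1(i)+0.01*alfa*error(i);

  end

  for i = 1:inputpoints

    w(i,h) = w(i,h) + belt*error_rate_bin(h)*a(i);  % input-to-hidden weight update

    %base2=base2+0.01*belt*out_error;

  end

end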
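The two weight updates of Step 6 are outer products of a layer's activations with the next layer's error terms. The sketch below shows that form with assumed numeric values; the threshold update is left out, and `delta_out` and `delta_hid` are illustrative names for `error_rate_cin` and `error_rate_bin`.

```matlab
% Outer-product form of the Step 6 weight updates (all numeric values are assumptions).
alfa = 1; belt = 0.5;                 % learning-rate coefficients as in Step 1
a         = [0.3, 0.7];               % input-layer values
bout      = [0.6, 0.4, 0.5];          % hidden-layer outputs
delta_out = [0.12, -0.30];            % output-layer error terms
delta_hid = [0.01, -0.02, 0.03];      % hidden-layer error terms
w = zeros(2, 3);                      % input-to-hidden weights
v = zeros(3, 2);                      % hidden-to-output weights

v = v + alfa * (bout' * delta_out);   % v(h,o) += alfa * delta_out(o) * bout(h)
w = w + belt * (a'    * delta_hid);   % w(i,h) += belt * delta_hid(h) * a(i)

disp(v); disp(w);
```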

Step 7: compute the overall error sum.

temp_error=temp_error+error(o)*error(o);

Testerror(j)=temp_error;

error_sum=error_sum+Testerror(j);

Step 8: check whether the error satisfies the required precision. If it does, return; otherwise go back to Step 3, until the limit on the number of training rounds is exceeded.
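The stopping rule of Step 8 can be sketched as a loop wrapped around Steps 3 to 7. The example below is a toy illustration, not the author's script: the data, the precision target `tol` and the round limit `maxRounds` are assumptions, the threshold updates are omitted, and the forward and backward passes are written in vectorized form.

```matlab
% Step 8 stopping rule around Steps 3-7, on a toy 1-D problem (assumed data).
X = linspace(0, 1, 21)';              % toy inputs, already in [0,1]
Y = X.^2;                             % toy targets, already in [0,1]
nHidden = 5;
w = zeros(1, nHidden);                % zero initialization, as in Step 1
v = zeros(nHidden, 1);
base1 = zeros(1, nHidden); base2 = 0; % thresholds, kept fixed in this sketch
alfa = 1; belt = 0.5; gama = 3;
tol = 0.05;                           % hypothetical precision target
maxRounds = 200;                      % hypothetical limit on training rounds
history = zeros(1, maxRounds);

k = 0; error_sum = Inf;
while error_sum > tol && k < maxRounds
    k = k + 1;
    error_sum = 0;
    for j = 1:numel(X)
        a = X(j); y = Y(j);
        bout = 1 ./ (1 + exp(-(a * w - base1)));      % Step 3
        cout = 1 ./ (1 + exp(-(bout * v - base2)));   % Step 4
        err  = y - cout;
        error_sum = error_sum + sum(err.^2);          % Step 7, unscaled error
        d_out = gama * err .* cout .* (1 - cout);
        d_hid = (d_out * v') .* bout .* (1 - bout);   % Step 5
        v = v + alfa * (bout' * d_out);               % Step 6
        w = w + belt * (a' * d_hid);
    end
    history(k) = error_sum;           % error sum of round k
end
history = history(1:k);
fprintf('stopped after %d rounds, error sum = %.4f\n', k, error_sum);
```

Plotting `history` gives a quick view of whether extra training rounds still reduce the error sum.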

Parameter design

1. With 50 hidden nodes, the error sum is smallest when alfa*gama = 3; belt has only a small influence.

2. With 100 hidden nodes, the error sum is smallest when alfa*gama = 1.5; belt has only a small influence. The minimum error sum is approximately the same as with 50 hidden nodes.

3. With 200 hidden nodes, the error sum is smallest when alfa*gama = 0.7; belt has only a small influence. The minimum error sum is approximately the same as with 50 hidden nodes.

4. base1 has very little influence on the minimum error sum, but it does help stabilize the system.
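Why the product alfa*gama, rather than either factor alone, is the quantity being tuned can be read off the update rules in Steps 4 to 6. Substituting the amplified error into the weight updates gives the following, where $b$ and $c$ are notation introduced here for `bout` and `cout`:

```latex
\begin{aligned}
v_{ho} &\leftarrow v_{ho}
  + \underbrace{\mathtt{alfa}\cdot\mathtt{gama}}_{\text{effective rate}}\,
    \bigl(y_o - c^{\mathrm{out}}_o\bigr)\, c^{\mathrm{out}}_o\bigl(1 - c^{\mathrm{out}}_o\bigr)\, b^{\mathrm{out}}_h \\[4pt]
w_{ih} &\leftarrow w_{ih}
  + \underbrace{\mathtt{belt}\cdot\mathtt{gama}}_{\text{effective rate}}\,
    \Bigl[\sum_{o} \bigl(y_o - c^{\mathrm{out}}_o\bigr)\, c^{\mathrm{out}}_o\bigl(1 - c^{\mathrm{out}}_o\bigr)\, v_{ho}\Bigr]\,
    b^{\mathrm{out}}_h\bigl(1 - b^{\mathrm{out}}_h\bigr)\, a_i
\end{aligned}
```

The reported optima also suggest, from only three data points, that the best alfa*gama shrinks roughly in inverse proportion to the number of hidden nodes: $50 \times 3 = 150$, $100 \times 1.5 = 150$ and $200 \times 0.7 = 140$.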

4. Performance analysis

Problem A:

50 hidden points, trained 200 times: when the network is tested with the testing samples, the shape is basically as expected, and the error sum is 1.49.

10 hidden points, trained 200 times: when the network is tested with the testing samples, the shape is basically as expected, and the error sum is 0.89416.

20 hidden points, trained 200 times: when the network is tested with the testing samples, the shape is basically as expected, and the error sum is 0.89416.

Problem B:

20 hidden points, trained 200 times: when the network is tested with the testing samples, the shape is basically as expected, and the error sum is 2.3833.

alfa = 0.5; belt = 0.5; gama = 3; learning rate: alfa*gama = 1.5

From the first and second figures, we conclude that the ANN with more hidden points performs better.

From figures 2 to 4, we conclude that this ANN performs best at a learning rate of 1.5.

Problem C:

From the two figures, we can see that with all other parameters the same, the ANN with 50 hidden nodes has an error sum of only 0.89102, whereas the ANN with 20 hidden nodes has an error sum of 1.8654. Thus, we conclude that the ANN with more hidden nodes performs better.

From the two figures, the ANN trained for 10 rounds produces the same error sum (0.89) as the ANN trained for 100 rounds.

5. Conclusion

Artificial neural networks are a completely different approach to processing information in AI. Instead of being programmed with exactly what to do, step by step, they are trained with sample data. They can then use this previous data to classify new things in ways that would be difficult for humans to do. They can be very powerful classification tools, since they are capable of handling massive amounts of data through parallel processing. Learning is accomplished through supervised and unsupervised models. Algorithmic advances in neural networks have led to advancements in many fields, such as model simulation and pattern recognition. Thus, owing to the wide variety of high-complexity problems that neural networks can solve, they are a widely researched area with many promising capabilities.
