基于Hadoop生态圈的数据仓库实践进阶技术十.docx

基于Hadoop生态圈的数据仓库实践进阶技术十.docx

- 文档编号:23801443

- 上传时间:2023-05-20

- 格式:DOCX

- 页数:27

- 大小:194.25KB

基于Hadoop生态圈的数据仓库实践进阶技术十.docx

《基于Hadoop生态圈的数据仓库实践进阶技术十.docx》由会员分享,可在线阅读,更多相关《基于Hadoop生态圈的数据仓库实践进阶技术十.docx(27页珍藏版)》请在冰豆网上搜索。

基于Hadoop生态圈的数据仓库实践进阶技术十

基于Hadoop生态圈的数据仓库实践——进阶技术(十)

十、杂项维度

本节讨论杂项维度。

简单地说,杂项维度就是一种包含的数据具有很少可能值的维度。

例如销售订单,它可能有很多离散数据(yes-no这种类型的值),如

verification_ind(如果订单已经被审核,值为yes)

credit_check_flag(表示此订单的客户信用状态是否已经检查)

new_customer_ind(如果这是新客户的首个订单,值为yes)

web_order_flag(表示此订单是否是在线下的订单)

这类数据常被用于增强销售分析,其特点是属性可能很多但每种属性的可能值很少,适合用称为杂项维度的特殊维度类型存储。

1.新增销售订单属性杂项维度

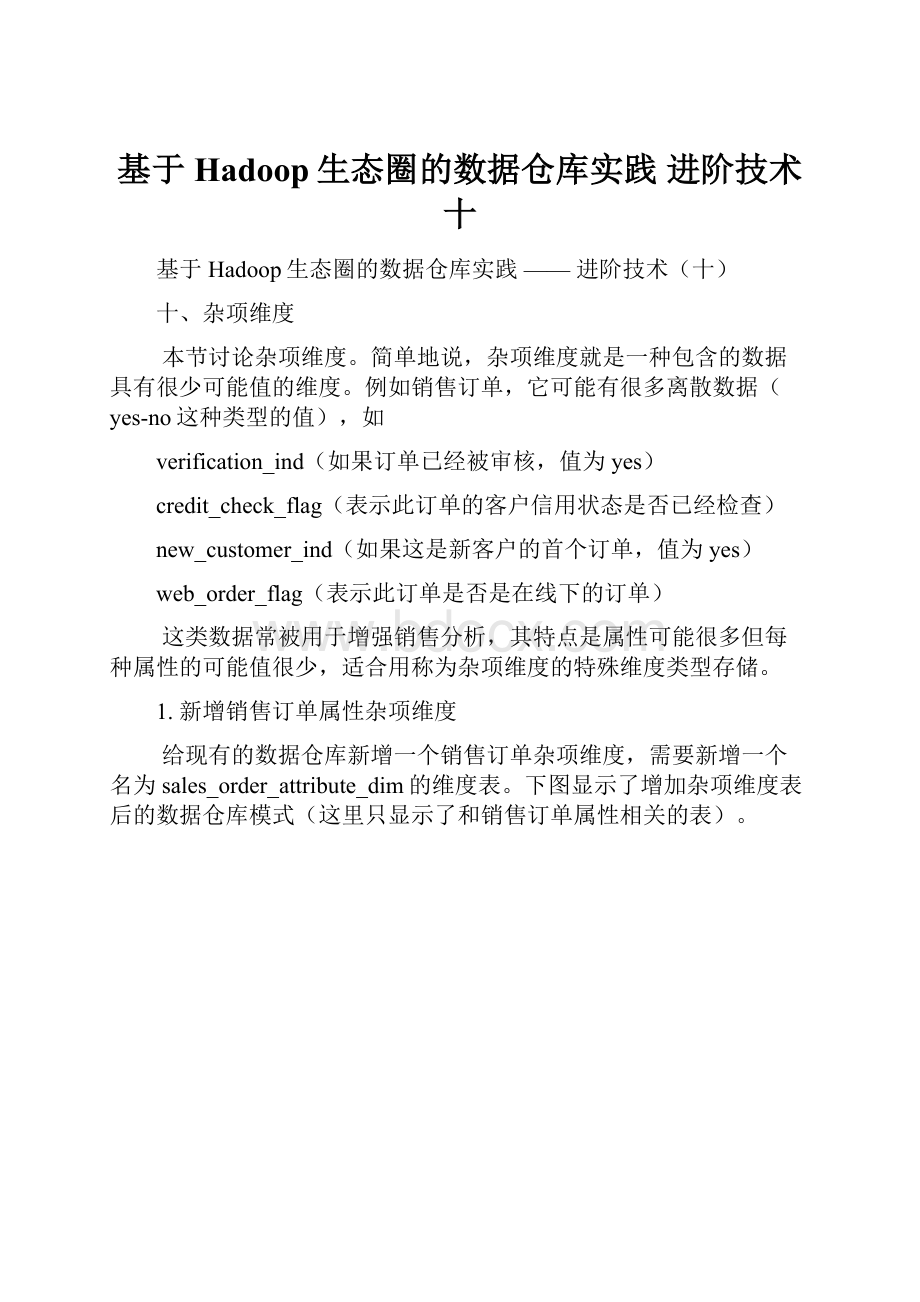

给现有的数据仓库新增一个销售订单杂项维度,需要新增一个名为sales_order_attribute_dim的维度表。

下图显示了增加杂项维度表后的数据仓库模式(这里只显示了和销售订单属性相关的表)。

新的维度表包括四个yes-no列:

verification_ind、credit_check_flag、new_customer_ind和web_order_flag。

每个列可以有两个可能值中的一个(Y或N),因此sales_order_attribute_dim表最多有16(2^4)行。

可以预装载这个维度,并且只需装载一次。

注意,如果知道某种组合是不可能出现的,就不需要装载这种组合。

执行下面的脚本修改数据库模式。

这个脚本做了四项工作:

建立sales_order_attribute_dim表,向表中预装载全部16种可能的数据,给销售订单事实表添加杂项维度代理键,给源数据库里的sales_order表增加对应的四个属性列。

[sql]viewplaincopy

USEdw;

--建立杂项维度表

CREATETABLEsales_order_attribute_dim(

sales_order_attribute_skINTCOMMENT'salesorderattributesurrogatekey',

verification_indCHAR

(1)COMMENT'verificationindex,YorN',

credit_check_flagCHAR

(1)COMMENT'creditcheckflag,YorN',

new_customer_indCHAR

(1)COMMENT'newcustomerindex,YorN',

web_order_flagCHAR

(1)COMMENT'weborderflag,YorN',

versionintCOMMENT'version',

effective_dateDATECOMMENT'effectivedate',

expiry_dateDATECOMMENT'expirydate'

)

clusteredby(sales_order_attribute_sk)into8buckets

storedasorctblproperties('transactional'='true');

--生成杂项维度数据

INSERTINTOsales_order_attribute_dimVALUES(1,'Y','N','N','N',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(2,'Y','Y','N','N',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(3,'Y','Y','Y','N',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(4,'Y','Y','Y','Y',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(5,'Y','N','Y','N',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(6,'Y','N','Y','Y',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(7,'Y','N','N','Y',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(8,'Y','Y','N','Y',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(9,'N','N','N','N',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(10,'N','Y','N','N',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(11,'N','Y','Y','N',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(12,'N','Y','Y','Y',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(13,'N','N','Y','N',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(14,'N','N','Y','Y',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(15,'N','N','N','Y',1,'1900-00-00','2200-01-01');

INSERTINTOsales_order_attribute_dimVALUES(16,'N','Y','N','Y',1,'1900-00-00','2200-01-01');

--建立杂项维度外键

altertablesales_order_factrenametosales_order_fact_old;

createtablesales_order_fact(

order_numberintCOMMENT'ordernumber',

customer_skintCOMMENT'customersurrogatekey',

product_skintCOMMENT'productsurrogatekey',

sales_order_attribute_skintCOMMENT'salesorderattributesurrogatekey',

order_date_skintCOMMENT'orderdatesurrogatekey',

allocate_date_skintCOMMENT'allocatedatesurrogatekey',

allocate_quantityintCOMMENT'allocatequantity',

packing_date_skintCOMMENT'packingdatesurrogatekey',

packing_quantityintCOMMENT'packingquantity',

ship_date_skintCOMMENT'shipdatesurrogatekey',

ship_quantityintCOMMENT'shipquantity',

receive_date_skintCOMMENT'receivedatesurrogatekey',

receive_quantityintCOMMENT'receivequantity',

request_delivery_date_skintCOMMENT'requestdeliverydatesurrogatekey',

order_amountdecimal(10,2)COMMENT'orderamount',

order_quantityintCOMMENT'orderquantity')

clusteredby(order_number)into8buckets

storedasorctblproperties('transactional'='true');

insertintotablesales_order_fact

selectorder_number,

customer_sk,

product_sk,

null,

order_date_sk,

allocate_date_sk,

allocate_quantity,

packing_date_sk,

packing_quantity,

ship_date_sk,

ship_quantity,

receive_date_sk,

receive_quantity,

request_delivery_date_sk,

order_amount,

order_quantity

fromsales_order_fact_old;

droptablesales_order_fact_old;

--给源库的销售订单表增加对应的属性

USEsource;

ALTERTABLEsales_order

ADDverification_indCHAR

(1)AFTERproduct_code

ADDcredit_check_flagCHAR

(1)AFTERverification_ind

ADDnew_customer_indCHAR

(1)AFTERcredit_check_flag

ADDweb_order_flagCHAR

(1)AFTERnew_customer_ind;

--给销售订单过渡表增加对应的属性

USErds;

ALTERTABLEsales_orderADDCOLUMNS

(

verification_indCHAR

(1)COMMENT'verificationindex,YorN',

credit_check_flagCHAR

(1)COMMENT'creditcheckflag,YorN',

new_customer_indCHAR

(1)COMMENT'newcustomerindex,YorN',

web_order_flagCHAR

(1)COMMENT'weborderflag,YorN'

);

2.重建Sqoop作业

[plain]viewplaincopy在CODE上查看代码片派生到我的代码片

last_value=`sqoopjob--showmyjob_incremental_import--meta-connectjdbc:

hsqldb:

hsql:

//cdh2:

16000/sqoop|grepincremental.last.value|awk'{print$3}'`

sqoopjob--deletemyjob_incremental_import--meta-connectjdbc:

hsqldb:

hsql:

//cdh2:

16000/sqoop

sqoopjob\

--meta-connectjdbc:

hsqldb:

hsql:

//cdh2:

16000/sqoop\

--createmyjob_incremental_import\

--\

import\

--connect"jdbc:

mysql:

//cdh1:

3306/source?

useSSL=false&user=root&password=mypassword"\

--tablesales_order\

--columns"order_number,customer_number,product_code,status_date,entry_date,order_amount,quantity,request_delivery_date,order_status,verification_ind,credit_check_flag,new_customer_ind,web_order_flag"\

--hive-import\

--hive-tablerds.sales_order\

--incrementalappend\

--check-columnid\

--last-value$last_value

3.修改定期装载脚本

由于有了一个新的维度,必须修改定期装载脚本。

下面显示了修改后的regular_etl.sql脚本文件内容。

[sql]viewplaincopy在CODE上查看代码片派生到我的代码片

--设置变量以支持事务

sethive.support.concurrency=true;

sethive.exec.dynamic.partition.mode=nonstrict;

sethive.txn.manager=org.apache.hadoop.hive.ql.lockmgr.DbTxnManager;

setpactor.initiator.on=true;

setpactor.worker.threads=1;

USEdw;

--设置SCD的生效时间和过期时间

SEThivevar:

cur_date=CURRENT_DATE();

SEThivevar:

pre_date=DATE_ADD(${hivevar:

cur_date},-1);

SEThivevar:

max_date=CAST('2200-01-01'ASDATE);

--设置CDC的上限时间

INSERTOVERWRITETABLErds.cdc_timeSELECTlast_load,${hivevar:

cur_date}FROMrds.cdc_time;

--装载customer维度

--设置已删除记录和地址相关列上SCD2的过期,用<=>运算符处理NULL值。

UPDATEcustomer_dim

SETexpiry_date=${hivevar:

pre_date}

WHEREcustomer_dim.customer_skIN

(SELECTa.customer_sk

FROM(SELECTcustomer_sk,

customer_number,

customer_street_address,

customer_zip_code,

customer_city,

customer_state,

shipping_address,

shipping_zip_code,

shipping_city,

shipping_state

FROMcustomer_dimWHEREexpiry_date=${hivevar:

max_date})aLEFTJOIN

rds.customerbONa.customer_number=b.customer_number

WHEREb.customer_numberISNULLOR

(!

(a.customer_street_address<=>b.customer_street_address)

OR!

(a.customer_zip_code<=>b.customer_zip_code)

OR!

(a.customer_city<=>b.customer_city)

OR!

(a.customer_state<=>b.customer_state)

OR!

(a.shipping_address<=>b.shipping_address)

OR!

(a.shipping_zip_code<=>b.shipping_zip_code)

OR!

(a.shipping_city<=>b.shipping_city)

OR!

(a.shipping_state<=>b.shipping_state)

));

--处理customer_street_addresses列上SCD2的新增行

INSERTINTOcustomer_dim

SELECT

ROW_NUMBER()OVER(ORDERBYt1.customer_number)+t2.sk_max,

t1.customer_number,

t1.customer_name,

t1.customer_street_address,

t1.customer_zip_code,

t1.customer_city,

t1.customer_state,

t1.shipping_address,

t1.shipping_zip_code,

t1.shipping_city,

t1.shipping_state,

t1.version,

t1.effective_date,

t1.expiry_date

FROM

(

SELECT

t2.customer_numbercustomer_number,

t2.customer_namecustomer_name,

t2.customer_street_addresscustomer_street_address,

t2.customer_zip_codecustomer_zip_code,

t2.customer_citycustomer_city,

t2.customer_statecustomer_state,

t2.shipping_addressshipping_address,

t2.shipping_zip_codeshipping_zip_code,

t2.shipping_cityshipping_city,

t2.shipping_stateshipping_state,

t1.version+1version,

${hivevar:

pre_date}effective_date,

${hivevar:

max_date}expiry_date

FROMcustomer_dimt1

INNERJOINrds.customert2

ONt1.customer_number=t2.customer_number

ANDt1.expiry_date=${hivevar:

pre_date}

LEFTJOINcustomer_dimt3

ONt1.customer_number=t3.customer_number

ANDt3.expiry_date=${hivevar:

max_date}

WHERE(!

(t1.customer_street_address<=>t2.customer_street_address)

OR!

(t1.customer_zip_code<=>t2.customer_zip_code)

OR!

(t1.customer_city<=>t2.customer_city)

OR!

(t1.customer_state<=>t2.customer_state)

OR!

(t1.shipping_address<=>t2.shipping_address)

OR!

(t1.shipping_zip_code<=>t2.shipping_zip_code)

OR!

(t1.shipping_city<=>t2.shipping_city)

OR!

(t1.shipping_state<=>t2.shipping_state)

)

ANDt3.customer_skISNULL)t1

CROSSJOIN

(SELECTCOALESCE(MAX(customer_sk),0)sk_maxFROMcustomer_dim)t2;

--处理customer_name列上的SCD1

--因为hive的update的set子句还不支持子查询,所以这里使用了一个临时表存储需要更新的记录,用先delete再insert代替update

--因为SCD1本身就不保存历史数据,所以这里更新维度表里的所有customer_name改变的记录,而不是仅仅更新当前版本的记录

DROPTABLEIFEXISTStmp;

CREATETABLEtmpAS

SELECT

a.customer_sk,

a.customer_number,

b.customer_name,

a.customer_street_address,

a.customer_zip_code,

a.customer_city,

a.customer_state,

a.shipping_address,

a.sh

- 配套讲稿:

如PPT文件的首页显示word图标,表示该PPT已包含配套word讲稿。双击word图标可打开word文档。

- 特殊限制:

部分文档作品中含有的国旗、国徽等图片,仅作为作品整体效果示例展示,禁止商用。设计者仅对作品中独创性部分享有著作权。

- 关 键 词:

- 基于Hadoop生态圈的数据仓库实践 进阶技术十 基于 Hadoop 生态 数据仓库 实践 进阶 技术

冰豆网所有资源均是用户自行上传分享,仅供网友学习交流,未经上传用户书面授权,请勿作他用。

冰豆网所有资源均是用户自行上传分享,仅供网友学习交流,未经上传用户书面授权,请勿作他用。

《公务员财产申报制度》.docx

《公务员财产申报制度》.docx