BP网络实现函数逼近.docx (Function Approximation with a BP Network)

- Document ID: 3729766

- Upload date: 2022-11-25

- Format: DOCX

- Pages: 10

- Size: 31.06KB

Function Approximation with a BP Network

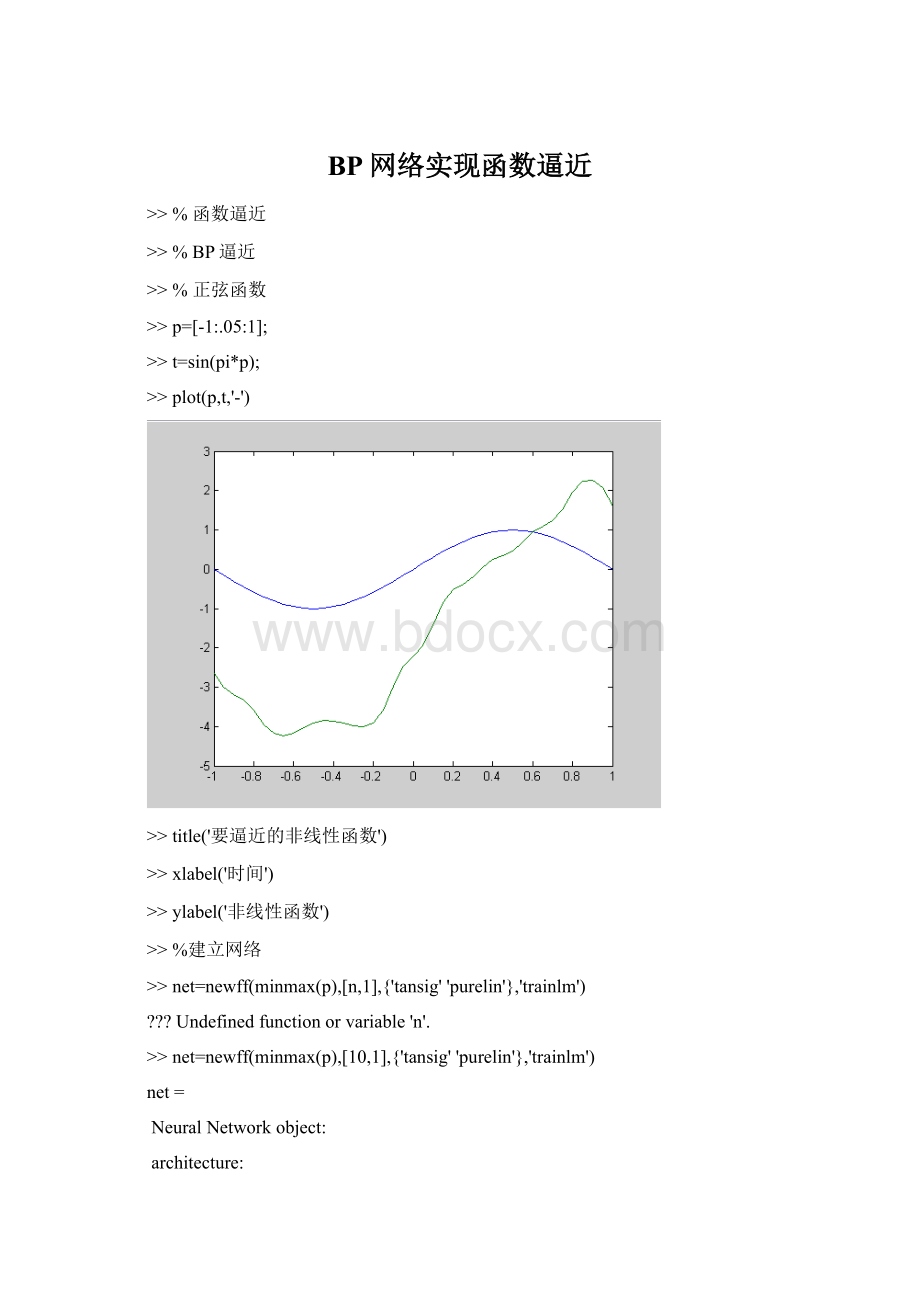

>>% Function approximation

>>% BP approximation

>>% Sine function

>>p=[-1:.05:1];

>>t=sin(pi*p);

>>plot(p,t,'-')

>>title('Nonlinear function to approximate')

>>xlabel('Time')

>>ylabel('Nonlinear function')
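The sample grid `-1:.05:1` gives 41 evenly spaced inputs, and the targets are `t = sin(pi*p)`. A minimal Python sketch of the same data (standard library only; the helper name `make_samples` is hypothetical, not part of the session):

```python
import math

def make_samples(start=-1.0, stop=1.0, step=0.05):
    """Mimic MATLAB's start:step:stop range and the target t = sin(pi*p)."""
    # Derive the point count from the range length to avoid float drift.
    n = int(round((stop - start) / step)) + 1
    p = [start + i * step for i in range(n)]
    t = [math.sin(math.pi * x) for x in p]
    return p, t

p, t = make_samples()
print(len(p))  # 41
```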

>>% Create the network

>>net=newff(minmax(p),[n,1],{'tansig' 'purelin'},'trainlm')

??? Undefined function or variable 'n'.

>>net=newff(minmax(p),[10,1],{'tansig' 'purelin'},'trainlm')

net=

Neural Network object:

architecture:

numInputs: 1

numLayers: 2

biasConnect: [1; 1]

inputConnect: [1; 0]

layerConnect: [0 0; 1 0]

outputConnect: [0 1]

targetConnect: [0 1]

numOutputs: 1 (read-only)

numTargets: 1 (read-only)

numInputDelays: 0 (read-only)

numLayerDelays: 0 (read-only)

subobject structures:

inputs: {1x1 cell} of inputs

layers: {2x1 cell} of layers

outputs: {1x2 cell} containing 1 output

targets: {1x2 cell} containing 1 target

biases: {2x1 cell} containing 2 biases

inputWeights: {2x1 cell} containing 1 input weight

layerWeights: {2x2 cell} containing 1 layer weight

functions:

adaptFcn: 'trains'

gradientFcn: 'calcjx'

initFcn: 'initlay'

performFcn: 'mse'

trainFcn: 'trainlm'

parameters:

adaptParam: .passes

gradientParam: (none)

initParam: (none)

performParam: (none)

trainParam: .epochs, .goal, .max_fail, .mem_reduc, .min_grad, .mu, .mu_dec, .mu_inc, .mu_max, .show, .time

weight and bias values:

IW: {2x1 cell} containing 1 input weight matrix

LW: {2x2 cell} containing 1 layer weight matrix

b: {2x1 cell} containing 2 bias vectors

other:

userdata: (user information)
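The display describes a two-layer network: one input, a 10-neuron `tansig` hidden layer and a 1-neuron `purelin` output layer, so the input weight matrix is 10×1, the layer weight matrix is 1×10, and the biases are 10×1 and 1×1. MATLAB defines `tansig(n) = 2/(1+exp(-2n)) - 1`, which is mathematically equal to `tanh(n)`. The display lists no input/output processing functions, so the following Python sketch of what `sim(net,p)` computes applies none. The names `tansig`, `purelin` and `forward` are written here for illustration, and the random weights are hypothetical (uniform in [-1, 1], not the toolbox's initialization), so the outputs will not reproduce `y1`:

```python
import math
import random

def tansig(n):
    # MATLAB defines tansig(n) = 2/(1+exp(-2n)) - 1, which equals tanh(n);
    # math.tanh avoids overflow in exp for large negative n.
    return math.tanh(n)

def purelin(n):
    # Linear transfer function: output equals net input.
    return n

def forward(x, iw, b1, lw, b2):
    """Output of a 1-S-1 network for scalar input x.

    iw, b1, lw: length-S lists (input weights, hidden biases, layer weights);
    b2: scalar output bias.
    """
    a1 = [tansig(w * x + b) for w, b in zip(iw, b1)]          # hidden layer
    return purelin(sum(v * a for v, a in zip(lw, a1)) + b2)    # output layer

# Hypothetical random weights for S = 10 hidden neurons.
rng = random.Random(0)
S = 10
iw = [rng.uniform(-1, 1) for _ in range(S)]
b1 = [rng.uniform(-1, 1) for _ in range(S)]
lw = [rng.uniform(-1, 1) for _ in range(S)]
b2 = rng.uniform(-1, 1)

p = [-1 + 0.05 * i for i in range(41)]
y = [forward(x, iw, b1, lw, b2) for x in p]   # analogue of y1 = sim(net, p)
```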

>>y1=sim(net,p)

y1=

Columns 1 through 3

-2.639884443340668 -3.003073712383436 -3.194033204190848

Columns 4 through 6

-3.334757182601289 -3.594283021881933 -3.952800035494339

Columns 7 through 9

-4.173********4227 -4.229926693495091 -4.177********4165

Columns 10 through 12

-4.033732169794869 -3.900855790599750 -3.848671787584546

Columns 13 through 15

-3.854702861751757 -3.910085003672952 -3.983346156145338

Columns 16 through 18

-4.002511976178147 -3.912787247900780 -3.589914393048505

Columns 19 through 21

-2.981491918128088 -2.464572246101413 -2.201819415111595

Columns 22 through 24

-1.943687145980234 -1.441761719033607 -0.848860143340555

Columns 25 through 27

-0.519606766384197 -0.369775095602761 -0.200360969293050

Columns 28 through 30

.0514********

Columns 31 through 33

0.462714********* 0.693099718045013 0.946621054592838

Columns 34 through 36

1.095792225083417 1.230374007390826 1.514984692127723

Columns 37 through 39

1.947673481598037 2.231097763756593 2.273023421136330

Columns 40 through 41

.0772********

>>figure

>>plot(p,t,'-',p,y1,'-')

>>plot(p,t,'-',p,y1,'-')

>>plot(p,t,'-',p,y1,'-')

>>figure

>>plot(p,t,'-',p,y1,'-')

>>title('Output without training')

>>alabel('Time')

*WARNING* ALABEL is an obsolete function.

Use XLABEL, YLABEL, TITLE, and AXIS.

Type NNTWARN OFF to suppress NNT warning messages.

>>xlabel('Time')

>>ylabel('Simulated output -- original function -')
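The network's `performFcn` is `'mse'`: the mean of the squared errors `t - y` over all samples. The legible values of the untrained output `y1` reach about -4.2, far outside the target's [-1, 1] range, which is consistent with the large starting MSE of 5.29912 in the training log. A minimal Python sketch of the measure (the name `mse` mirrors the toolbox function but this is not its code):

```python
def mse(targets, outputs):
    """Mean squared error between targets t and network outputs y."""
    if len(targets) != len(outputs) or not targets:
        raise ValueError("targets and outputs must be non-empty and equal length")
    return sum((t - y) ** 2 for t, y in zip(targets, outputs)) / len(targets)

print(mse([0.0, 1.0, -1.0], [0.0, 0.5, -1.5]))  # 0.16666666666666666
```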

>>% Set the training parameters

>>net.trainParam.epochs=50

net=

(Neural Network object: same display as after the `newff` call above.)

>>net.trainParam.goal=0.01

net=

(Neural Network object: same display as after the `newff` call above.)
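With `epochs = 50` and `goal = 0.01` set, training ends as soon as one stopping condition holds. The `TRAINLM` log prints each quantity next to its threshold (`MSE 5.29912/0.01`, `Gradient 9.74288/1e-010`), the gradient threshold being the `min_grad` parameter. The Python sketch below checks only the goal, epoch, gradient and `mu_max` conditions (the toolbox also has `time` and `max_fail`, omitted here). The function name `stop_reason`, the order of the checks, and the `mu`/`mu_max` defaults are assumptions:

```python
def stop_reason(epoch, perf, grad, mu=0.001, epochs=50, goal=0.01,
                min_grad=1e-10, mu_max=1e10):
    """Return why training should stop, or None to keep training.

    epochs, goal and min_grad mirror this session; mu and mu_max
    defaults are assumptions of this sketch.
    """
    if perf <= goal:
        return "goal"        # corresponds to "Performance goal met."
    if epoch >= epochs:
        return "epochs"      # maximum epoch reached
    if grad < min_grad:
        return "min_grad"    # gradient too small to make progress
    if mu > mu_max:
        return "mu_max"      # damping factor grew too large
    return None

# Values taken from the TRAINLM log lines of this session.
print(stop_reason(0, 5.29912, 9.74288))       # None
print(stop_reason(2, 0.00800603, 0.134329))   # goal
```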

>>net=train(net,p,t)

TRAINLM-calcjx, Epoch 0/50, MSE 5.29912/0.01, Gradient 9.74288/1e-010

TRAINLM-calcjx, Epoch 2/50, MSE 0.00800603/0.01, Gradient 0.134329/1e-010

TRAINLM, Performance goal met.

net=

(Neural Network object: same display as after the `newff` call above.)
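`trainlm` is Levenberg-Marquardt training; in this session it met the goal (MSE 0.00800603 < 0.01) by epoch 2. The `.mu`, `.mu_dec`, `.mu_inc` and `.mu_max` entries of `trainParam` control its damping. Each step solves `(J'J + mu*I) dw = J'e`, where `J` is the Jacobian of the network outputs with respect to all weights and biases and `e = t - y`; if the step lowers the MSE it is accepted and `mu` shrinks, otherwise `mu` grows and the step is retried. The following is a from-scratch Python sketch of that loop for the same 1-10-1 tansig/purelin network, not the toolbox implementation: the initial weights are plain uniform random values, and `mu = 0.001`, `mu_dec = 0.1`, `mu_inc = 10`, `mu_max = 1e10` are assumed defaults, so its epoch count will not match the log.

```python
import math
import random

def predict(w, x, S):
    # Parameter layout (an assumption of this sketch): [iw(S), b1(S), lw(S), b2].
    iw, b1, lw, b2 = w[:S], w[S:2 * S], w[2 * S:3 * S], w[3 * S]
    a = [math.tanh(iw[j] * x + b1[j]) for j in range(S)]   # tansig hidden layer
    return sum(lw[j] * a[j] for j in range(S)) + b2, a     # purelin output

def mse_of(w, p, t, S):
    total = 0.0
    for x, ti in zip(p, t):
        d = ti - predict(w, x, S)[0]
        total += d * d
    return total / len(p)

def jacobian_row(w, x, S):
    # dy/dw for one sample, in the same order as the parameter layout.
    _, a = predict(w, x, S)
    lw = w[2 * S:3 * S]
    d_b1 = [lw[j] * (1.0 - a[j] * a[j]) for j in range(S)]
    d_iw = [g * x for g in d_b1]
    return d_iw + d_b1 + a + [1.0]

def solve(A, b):
    # Gaussian elimination with partial pivoting on a copy of [A | b].
    n = len(b)
    M = [A[i][:] + [b[i]] for i in range(n)]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[piv] = M[piv], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(M[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x

def train_lm(p, t, S=10, epochs=50, goal=0.01, mu=0.001,
             mu_dec=0.1, mu_inc=10.0, mu_max=1e10, seed=0):
    rng = random.Random(seed)
    w = [rng.uniform(-1, 1) for _ in range(3 * S + 1)]
    perf = mse_of(w, p, t, S)
    history = [perf]
    P = len(w)
    for _ in range(epochs):
        if perf <= goal:
            break
        J = [jacobian_row(w, x, S) for x in p]
        e = [ti - predict(w, x, S)[0] for x, ti in zip(p, t)]
        JtJ = [[sum(r[i] * r[k] for r in J) for k in range(P)] for i in range(P)]
        Jte = [sum(r[i] * ei for r, ei in zip(J, e)) for i in range(P)]
        while mu <= mu_max:
            A = [[JtJ[i][k] + (mu if i == k else 0.0) for k in range(P)]
                 for i in range(P)]
            w_new = [wi + di for wi, di in zip(w, solve(A, Jte))]
            perf_new = mse_of(w_new, p, t, S)
            if perf_new < perf:          # accept: move closer to Gauss-Newton
                w, perf, mu = w_new, perf_new, mu * mu_dec
                break
            mu *= mu_inc                 # reject: move toward gradient descent
        else:
            break                        # mu exceeded mu_max
        history.append(perf)
    return w, history

p = [-1 + 0.05 * i for i in range(41)]
t = [math.sin(math.pi * x) for x in p]
w, history = train_lm(p, t)
print(f"epochs run: {len(history) - 1}, final MSE: {history[-1]:.6g}")
```

Because a step is only accepted when it lowers the MSE, the recorded `history` never increases; the damping term `mu*I` also keeps the linear system non-singular.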

>>y2=sim(net,p)

y2=

Columns 1 through 3

-0.163945119453260 -0.314801404346401 -0.396212927883259

Columns 4 through 6

-0.464005988596723 -0.577344820461112 -0.725133963260853

Columns 7 through 9

-0.835863071366846 -0.897583515107236 -0.929224445836892

Columns 10 through 12

-0.941268520048803 -0.943340950302253 -0.938290824540133

Columns 13 through 15

-0.918970006577212 -0.866697154112054 -0.776730723710424

Columns 16 through 18

-0.683516848019898 -0.586853316709729 -0.457047669841560

Columns 19 through 21

-0.296802951006046 -0.142029497211778 -0.008439160364873

Columns 22 through 24

0.129994607110186 0.296950072797662 0.463882402093120

Columns 25 through 27

0.589657416182991 0.689247257010321 0.799379483338591

Columns 28 through 30

0.896613507423913 0.945544253657794 0.962152238564978

Columns 31 through 33

0.965738530065961 0.961715538193954 0.944697272164152

Columns 34 through 36

0.903664529598943 0.829365446529125 0.682820156834166

Columns 37 through 39

0.426293097860224 0.152********0683 0.005787341837531

Columns 40 through 41

-0.040425136696049 -0.052218702080411

>>plot(p,t,'y',p,y1,'r',p,y2,'--')

>>figure

>>plot(p,t,'y',p,y1,'r',p,y2,'--')

>>title('Result after training')

>>title('Result after training')

>>alabel('Time')

*WARNING* ALABEL is an obsolete function.

Use XLABEL, YLABEL, TITLE, and AXIS.

Type NNTWARN OFF to suppress NNT warning messages.

>>ylabel('Simulated output')

>>ylabel('Simulated output --')

>>
